3FS Optimization 01 | Server-Side Optimization

As described in the article "Phantom Force | High Speed File Series 3FS", Phantom AI has designed 3FS, a sample-reading file system well suited to deep learning training. 3FS uses Direct IO and RDMA Read so that the sample-reading part of model training obtains very high read bandwidth with very little CPU and memory overhead. Training no longer has to wait for data to load, and more of the GPU's performance can be put to use.

A file system is generally divided into a client and a server. In 3FS, the client is deployed on the compute nodes and accesses the 3FS server, deployed on the storage nodes, over the network. There is a lot of room for optimization along this path. This article covers the optimizations on the server side.

To reduce memory copies and increase read throughput, the main measures on the 3FS server side are:

- Use Direct IO for reads, avoiding the extra copy from disk into the Page Cache.

- Use the AIO interface to improve read throughput.

- Align data on the client side to simplify use of the interface.

Direct IO

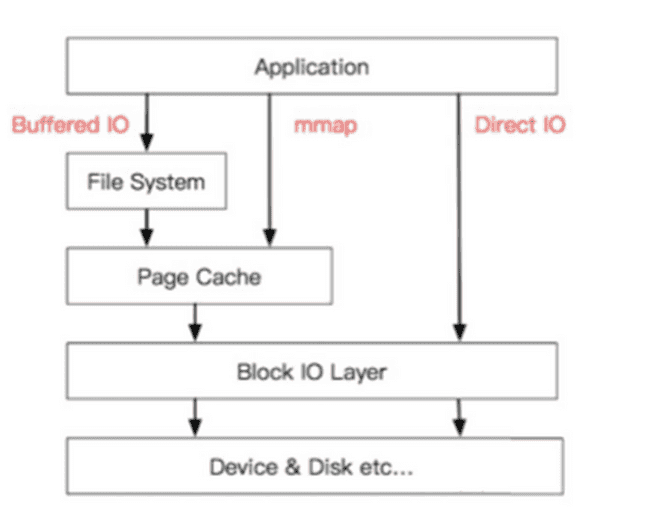

Linux provides two file IO modes, Buffered IO and Direct IO. Buffered IO uses the kernel's Page Cache to cache disk data, which brings significant performance benefits for data that is accessed multiple times. In deep learning training, however, each iteration reads new data, so caching is pointless and the Page Cache becomes a burden. The 3FS server therefore reads data with Direct IO by default. Bypassing the Page Cache removes one data copy, because data moves directly from disk to user memory, and it also avoids the high memory usage of the Page Cache.
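To make the Direct IO path concrete, here is a minimal Python sketch of a read that bypasses the Page Cache. It is not the 3FS implementation. The function name `read_direct`, the 4096-byte `BLOCK` constant, and the fallback to buffered IO when a file system rejects `O_DIRECT` are all assumptions made for illustration.

```python
import errno
import mmap
import os

# Assumed alignment: 4096 is a multiple of the typical 512-byte logical block size.
BLOCK = 4096


def read_direct(path: str, offset: int, length: int) -> bytes:
    """Read `length` bytes at `offset`; both must be multiples of BLOCK."""
    if offset % BLOCK or length % BLOCK:
        raise ValueError("offset and length must be multiples of BLOCK")
    if length == 0:
        return b""
    flags = os.O_RDONLY | getattr(os, "O_DIRECT", 0)
    try:
        fd = os.open(path, flags)
    except OSError as exc:
        # Some file systems reject O_DIRECT with EINVAL.
        if exc.errno != errno.EINVAL:
            raise
        fd = os.open(path, os.O_RDONLY)  # illustrative fallback to Buffered IO
    try:
        # An anonymous mmap is page-aligned, which satisfies the
        # buffer-address requirement of Direct IO.
        with mmap.mmap(-1, length) as buf:
            n = os.preadv(fd, [buf], offset)  # may be short at end of file
            return buf[:n]
    finally:
        os.close(fd)
```

The caller is responsible for alignment here: an unaligned offset or length is rejected up front rather than silently padded.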

AIO

AIO is an asynchronous IO interface provided by Linux. It allows an application to submit multiple IO operations that execute concurrently in the background, and then to wait for their completion in the foreground. Currently, AIO only supports files opened with Direct IO. Compared with the traditional synchronous IO interface, AIO can provide higher read/write throughput with lower CPU usage. Phantom AI's deep learning training framework uses the AIO interface to read training samples by default. In addition, when a synchronous read is larger than a threshold (1MB by default), the 3FS client converts it into an AIO operation to improve read performance. We are also adapting to the newer io_uring interface provided by the kernel, which we believe will bring further performance improvements.
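The submit-then-wait pattern can be sketched as follows. Python's standard library has no binding for Linux AIO, so this example swaps in a thread pool running `os.pread` to emulate concurrent background reads; it is not Linux AIO and not the 3FS client. The names `read_batch`, `read_sync`, and `CHUNK` are hypothetical, and splitting a large read into chunks is purely an illustrative assumption, since the text above does not say how a converted operation is issued.

```python
import os
from concurrent.futures import ThreadPoolExecutor

AIO_THRESHOLD = 1 << 20  # 1 MiB, the default threshold mentioned above
CHUNK = 256 * 1024       # hypothetical sub-request size


def read_batch(fd, requests, pool):
    """Submit every (offset, length) request, then wait for all results in order."""
    futures = [pool.submit(os.pread, fd, length, offset)
               for offset, length in requests]
    return [f.result() for f in futures]


def read_sync(fd, offset, length, pool):
    """Synchronous read that switches to batched submission above the threshold."""
    if length <= AIO_THRESHOLD:
        return os.pread(fd, length, offset)
    end = offset + length
    # Assumption: a large read is split into CHUNK-sized pieces.
    requests = [(off, min(CHUNK, end - off)) for off in range(offset, end, CHUNK)]
    return b"".join(read_batch(fd, requests, pool))
```

The caller still sees a blocking call in `read_sync`; only the way the work is issued underneath changes once the size crosses the threshold.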

Alignment

Direct IO has one major usage restriction: the memory address of the buffer, the size of each read, and the file offset must all be aligned to the logical block size of the underlying device, typically 512 bytes. To make the interface easier to use, the 3FS client performs the necessary alignment for AIO read operations. It pads the head and tail of the buffer to reach 512-byte alignment, and ignores the head and tail padding when the RDMA Read receives the data. This has the following two effects:

- Users' AIO operations no longer need to be aligned, which makes them easier to use.

- Users' synchronous IO operations can be converted directly into AIO operations on the client side, without the user having to consider alignment or O_DIRECT, which improves performance.
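The padding arithmetic described above can be sketched in a few lines of Python. This is only an illustration of the rounding, not the 3FS client code. The names `AlignedRead`, `align_read`, and `strip_padding` are hypothetical, and the 512-byte `ALIGN` value is the typical logical block size cited above.

```python
from typing import NamedTuple

ALIGN = 512  # typical logical block size


class AlignedRead(NamedTuple):
    offset: int  # aligned start offset
    length: int  # aligned length actually read from disk
    head: int    # padding bytes before the requested data
    tail: int    # padding bytes after the requested data


def align_read(offset: int, length: int, align: int = ALIGN) -> AlignedRead:
    """Expand an arbitrary (offset, length) request to aligned boundaries."""
    start = offset - offset % align               # round start down
    end = -(-(offset + length) // align) * align  # round end up
    return AlignedRead(start, end - start, offset - start, end - (offset + length))


def strip_padding(block: bytes, plan: AlignedRead) -> bytes:
    """Drop the head and tail padding, keeping only the requested bytes."""
    wanted = plan.length - plan.head - plan.tail
    return block[plan.head:plan.head + wanted]
```

For example, a request for 100 bytes at offset 1000 becomes an aligned read of 1024 bytes at offset 512, with 488 bytes of head padding and 436 bytes of tail padding.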

Summary

In this article, we presented some of Phantom AI's thinking on server-side optimization in the design of the 3FS file system. We use Direct IO and asynchronous, aligned reads so that server-side data loading better matches the access pattern of model training, which yields better read performance.

3FS did not reach this level of performance overnight. Phantom AI has run into many challenges while optimizing it over a long period of practice. This series will continue to share stories about 3FS performance optimization, including the pitfalls we have fallen into, in the hope of prompting better ideas from others. Researchers and industry experts are welcome to join the discussion.

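- Author: suopu

You may repost, excerpt, and quote the content of this technical blog without departing from the original meaning of the work, provided that you comply with the following terms. Attribution: you must credit the original author, but you may not in any way suggest that Phantom AI endorses you, nor may your use adversely affect Phantom AI's rights. NonCommercial: you may not use the content of this technical blog for commercial purposes. NoDerivatives: if you adapt, transform, or build upon this content, you may not publish or distribute the modified content; it may be used for personal purposes only.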