3FS Optimization 03 | Data Read Mode Adaptation

As described in the article "Phantom Force | High Speed File Series 3FS", Phantom AI has designed 3FS, a sample-reading file system well suited to deep learning training. It uses Direct IO and RDMA Read so that the sample-reading stage of model training achieves very high read bandwidth with minimal CPU and memory overhead. This eliminates the need to wait for data to load during training and makes fuller use of the GPUs' computational performance.

In practice, however, we ran into many problems we had not anticipated. One of them is tasks interfering with each other, which is mainly caused by differences in the tasks' data read modes. This article describes the background of that problem and our approach to solving it in detail.

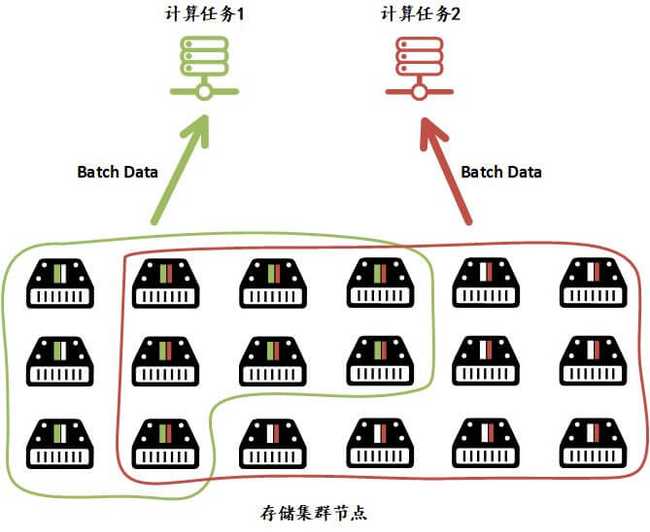

As we know, Phantom AI's deep learning platform provides deep learning training services with time-sharing scheduling: users are free to configure resources and submit tasks on the platform. As a result, multiple tasks train in the compute cluster and pull data from the storage cluster at the same time, and the different read modes and processing methods of these tasks can affect one another.

We found:

- If there is only one type of task in the system, each node gets decent read performance, even when many storage nodes are in use;

- If tasks with different read modes are then scheduled onto the system, the read bandwidth of the earlier tasks drops drastically, even though the total bandwidth of 3FS is not saturated.

As shown in the above figure, two compute tasks on the compute cluster side are simultaneously reading batches of data from 3FS to train their models. The read mode of task 1 reaches only part of the nodes in the 3FS storage cluster. Because its reads are load-balanced across those nodes, it takes about 2/3 of the bandwidth of each node it reads from. The read mode of task 2 reaches most of the nodes in the 3FS storage cluster, with its load balanced evenly across them. The nodes that serve task 1 have only 1/3 of their bandwidth left for task 2, and since task 2 spreads its reads evenly, those nodes hold it back: the nodes that do not serve task 1 cannot put their spare bandwidth to use.
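The effect in the figure can be reproduced with a small back-of-the-envelope model. The sketch below is not 3FS code. It assumes a hypothetical cluster of 6 storage nodes, treats each node's bandwidth as 1 unit, and assumes that a task which splits its reads evenly across nodes can go no faster than its most constrained node allows. The function names are illustrative only.

```python
from fractions import Fraction


def residual_bandwidth(num_nodes, task1_nodes, task1_share):
    """Fraction of each node's bandwidth left after task 1 takes its share."""
    return [
        1 - task1_share if node in task1_nodes else Fraction(1)
        for node in range(num_nodes)
    ]


def balanced_throughput(residual):
    """Throughput of a task that reads equal amounts from every node.

    With an even split, the task is paced by its slowest node, so the
    total is (number of nodes) * (smallest residual bandwidth).
    """
    return len(residual) * min(residual)


# Hypothetical numbers: 6 storage nodes, task 1 reads from nodes 0..2
# and takes 2/3 of each of them, as in the scenario described above.
residual = residual_bandwidth(6, {0, 1, 2}, Fraction(2, 3))
task2 = balanced_throughput(residual)  # 6 * 1/3 = 2 node-bandwidths
spare = sum(residual) - task2          # capacity that stays idle
print(f"task 2 gets {task2}, idle capacity {spare}")
```

Under these assumptions task 2 receives 2 node-bandwidths while another 2 node-bandwidths stay idle on the nodes that task 1 never touches, which matches the observation that total 3FS bandwidth is not saturated.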

We analyzed the read mode of the affected compute task 1 and found that each sample it reads is very large, around 24MB, and that each batch reads data from only a small number of files. With the default chunk size of 2MB, this task reads from about 40 storage service nodes in a single batch, whereas tasks similar to compute task 2 read from close to 60, or even all 64, storage service nodes. Since the total bandwidth of a single storage service node is limited, a task that connects to fewer storage service nodes places a higher bandwidth demand on each of them. When multiple tasks read from 3FS at the same time, some nodes become fully loaded and the bandwidth demanded by some tasks cannot be satisfied, which in turn degrades read performance on the compute node side.
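The arithmetic behind this paragraph can be written down directly. The sketch below assumes samples are exactly 24MB and aligned to chunk boundaries (the text only says "around 24MB"), and it assumes a task's demand is spread evenly over the nodes it reaches. The helper names are hypothetical.

```python
from fractions import Fraction

MB = 1024 * 1024


def chunks_per_sample(sample_bytes, chunk_bytes):
    """Number of chunks needed to hold one sample (ceiling division)."""
    return -(-sample_bytes // chunk_bytes)


def per_node_demand(task_bandwidth, nodes_reached):
    """Bandwidth a task asks of each node when its reads are spread evenly."""
    return Fraction(task_bandwidth, nodes_reached)


# A 24MB sample stored in 2MB chunks occupies 12 chunks.
chunks = chunks_per_sample(24 * MB, 2 * MB)

# Two tasks with the same total demand (1 unit, an arbitrary choice):
narrow = per_node_demand(1, 40)  # a task that reaches about 40 nodes
wide = per_node_demand(1, 64)    # a task that reaches all 64 nodes
ratio = narrow / wide            # 8/5
print(chunks, float(ratio))      # 12 1.6
```

For the same total demand, the task that reaches 40 nodes asks each of them for 1.6 times as much bandwidth as a task that reaches all 64.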

Therefore, any task reading from 3FS should spread its reads across as many of the 64 storage service nodes as possible at the same time, which reduces the bandwidth demanded of any single storage node. We reduced the chunk size to 512KB and added a control over the number of servers across which a file is distributed, which ultimately solved the problem.
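To illustrate why a smaller chunk size widens the set of nodes a single read reaches, here is a minimal sketch. The article does not describe how 3FS actually places chunks, so the round-robin placement below is an assumption, and `stripe_width` is a hypothetical name for the control over how many servers a file is distributed across.

```python
KB = 1024
MB = 1024 * KB


def servers_for_read(offset, length, chunk_size, stripe_width,
                     first_server=0, num_servers=64):
    """Return the set of servers touched by reading [offset, offset + length).

    Assumed placement: chunk i of a file lives on server
    (first_server + i % stripe_width) % num_servers.
    """
    first_chunk = offset // chunk_size
    last_chunk = (offset + length - 1) // chunk_size
    return {
        (first_server + chunk % stripe_width) % num_servers
        for chunk in range(first_chunk, last_chunk + 1)
    }


sample = 24 * MB
# 2MB chunks: one 24MB sample spans 12 chunks, so it reaches 12 servers.
print(len(servers_for_read(0, sample, 2 * MB, stripe_width=64)))    # 12
# 512KB chunks: the same sample spans 48 chunks and reaches 48 servers.
print(len(servers_for_read(0, sample, 512 * KB, stripe_width=64)))  # 48
# A narrower stripe caps the spread regardless of chunk size.
print(len(servers_for_read(0, sample, 512 * KB, stripe_width=16)))  # 16
```

In this model, cutting the chunk size from 2MB to 512KB quadruples the number of servers one sample read reaches, so each server sees a quarter of the demand, while the stripe width sets an upper bound on that spread per file.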

- Author: suopu

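You may reprint, excerpt without distorting the original meaning, and quote the content of this technical blog, subject to the following terms. Attribution: you must credit the original author, but you may not in any way suggest that Phantom AI (幻方) endorses you, and your use must not adversely affect Phantom AI's rights. Non-commercial use: you may not use the content of this technical blog for commercial purposes. No derivatives: if you adapt, transform, or build upon this content, you may not publish or distribute the modified content; it is for personal use only.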