AlphaFold Training Optimization 03 | Pitfall Diary

The previous two articles described how Phantom AI optimized AlphaFold: feature preprocessing and feature cropping improved data-processing performance, parallel-training acceleration tools further increased training speed, and deep integration with the characteristics of Phantom AI's cluster maximized computational performance.

So, taken as a whole, what else needs attention when training AlphaFold on Phantom Firefly II, and how should similar deep learning models be optimized in the future? This article shares some of Phantom Cube AI's thinking on these topics.

Model repository: https://github.com/HFAiLab/alphafold-optimized

Overall optimization effect

First, let's look at the final AlphaFold training results on Phantom Firefly II after applying the optimizations from the first two articles. We used 16 nodes with a total of 128 A100 GPUs to run a relatively complete AlphaFold training on nearly 70,000 protein sequences and obtained the following results:

| Metric | Value |
|---|---|
| Average GPU utilization | 90.14% |
| Average time per epoch (min) | 84.15 |
| Average time per iteration (s) | 11.32 |
| Data loading time at each epoch start (s) | 197 |
| Total number of iterations | 35680 |
| Total training time (h) | 112 |
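As a quick sanity check on the figures above, the total training time can be re-derived from the iteration count and the average time per iteration. The snippet below is only an arithmetic sketch. The variable names are ours, and the per-node GPU count is inferred from "16 nodes, 128 GPUs" rather than stated in the table.

```python
# Figures copied from the results table and the paragraph above it.
total_iterations = 35680
seconds_per_iteration = 11.32
reported_total_hours = 112
nodes, gpus = 16, 128

# Total time implied by iterations x average iteration time.
estimated_hours = total_iterations * seconds_per_iteration / 3600
relative_gap = abs(estimated_hours - reported_total_hours) / reported_total_hours

# GPUs per node, inferred from the node and GPU totals (an assumption, not a table entry).
gpus_per_node = gpus // nodes

print(f"estimated total: {estimated_hours:.1f} h")
print(f"gap vs reported: {relative_gap:.2%}")
print(f"GPUs per node:   {gpus_per_node}")
```

The estimate comes out at roughly 112.2 hours, within about 0.2% of the reported 112 hours, so the iteration count, iteration time, and total time in the table agree with one another.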

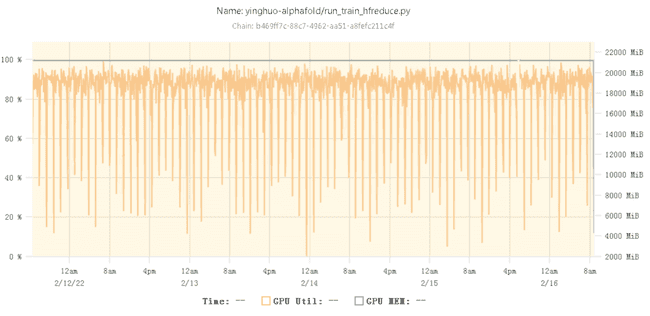

The GPU utilization curve during training is as follows:

GPU utilization stays around 90% for most of the run, which shows that, after the optimizations described in the previous articles, AlphaFold trains efficiently on the Phantom AI platform. The occasional dips come from the time-consuming data loading at the start of each epoch (about 197 s, as listed in the table above).

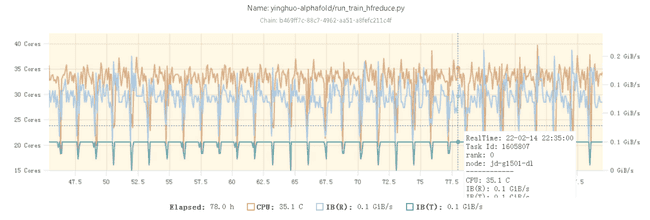

In addition, the CPU utilization graph shows that CPU utilization stayed above 50% (about 35 of 64 cores) for long stretches of training, which indicates that AlphaFold training places a very high demand on CPU compute.

Some pitfalls in multi-GPU training

While optimizing the AlphaFold model, especially when scaling from a single node with a single GPU to multiple nodes with multiple GPUs, we ran into quite a few problems.

1. OpenFold code issues

DeepMind has not open-sourced the AlphaFold training code, so the mainstream AlphaFold training code on GitHub is implemented by third parties. One example is OpenFold (https://github.com/aqlaboratory/openfold), a relatively complete PyTorch reproduction of DeepMind's open-source AlphaFold 2 (https://github.com/deepmind/alphafold).

OpenFold builds the model with fairly complex code, and different protein sequences may not follow exactly the same forward path during training. As a result, in multi-GPU training some parameters do not take part in gradient computation on some machines. The `find_unused_parameters` option provided by PyTorch DDP does not detect these parameters correctly, so training fails intermittently with errors and cannot run properly on multiple GPUs.

After some troubleshooting, we found that some loss terms in the loss function do not take part in the computation; they have to be commented out manually before multi-GPU training runs normally.
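To illustrate the kind of problem described above, here is a minimal single-process sketch, assuming PyTorch is installed. It is a diagnostic idea, not the fix applied to OpenFold: `BranchyModel` and `params_without_grad` are hypothetical names, and the toy model only imitates a forward path that depends on the input. After a backward pass, it lists the parameters that received no gradient, which are the ones that can trip up DDP when ranks disagree.

```python
import torch
import torch.nn as nn


class BranchyModel(nn.Module):
    """Hypothetical toy model whose forward path depends on the input."""

    def __init__(self):
        super().__init__()
        self.shared = nn.Linear(4, 4)
        self.extra = nn.Linear(4, 4)  # only used for inputs with more than 2 rows

    def forward(self, x):
        h = self.shared(x)
        if x.shape[0] > 2:  # data-dependent branch
            h = self.extra(h)
        return h.sum()


def params_without_grad(model, loss):
    """Run backward on `loss` and return names of parameters left without a gradient."""
    model.zero_grad(set_to_none=True)
    loss.backward()
    return [name for name, p in model.named_parameters() if p.grad is None]


model = BranchyModel()
short_batch = torch.randn(2, 4)  # skips the `extra` layer
long_batch = torch.randn(3, 4)   # goes through the `extra` layer

unused_short = params_without_grad(model, model(short_batch))
unused_long = params_without_grad(model, model(long_batch))
print("unused for short batch:", unused_short)
print("unused for long batch: ", unused_long)
```

Running a check like this on a few representative samples before launching a multi-GPU job can help locate branches or loss terms that leave parameters without gradients.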

2. Environmental issues

Another easy pitfall is the environment. The Phantom AI platform ships with built-in environments such as PyTorch that suit common deep learning workloads. AlphaFold, as a new direction in deep learning, inevitably depends on an environment that differs from the conventional one.

Because the Phantom AI training platform separates compute from storage, its environment is configured uniformly and it does not host custom images. AlphaFold's runtime environment therefore has to be installed again step by step, which inevitably leads to problems such as version conflicts with the existing environment and missing system libraries.

So we created a brand-new virtual environment with haienv and installed the AlphaFold dependencies there. However, NCCL errors then appeared during multi-node, multi-GPU training. After some troubleshooting, we found the cause: haienv creates a new environment with its own Python binary, different from the original one, and this new binary did not have the IPC lock capability (cap_ipc_lock). The fix is to run the following command with sudo privileges: `setcap cap_ipc_lock+iep /venv/openfold38_0/bin/python`.

Finally, we have packaged the environment that AlphaFold depends on separately so that users can invoke it directly.
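One way to confirm whether a given Python interpreter actually holds this capability is to inspect the effective capability mask that Linux exposes in `/proc/self/status`. The sketch below is Linux-only and is our own illustration, not part of haienv or NCCL. `has_cap_ipc_lock` is a hypothetical helper, and bit 14 is the number assigned to CAP_IPC_LOCK in the Linux capability headers.

```python
from pathlib import Path

CAP_IPC_LOCK_BIT = 14  # CAP_IPC_LOCK's number in <linux/capability.h>


def has_cap_ipc_lock(status_text):
    """Return True if the CapEff mask in a /proc/<pid>/status dump includes CAP_IPC_LOCK."""
    for line in status_text.splitlines():
        if line.startswith("CapEff:"):
            mask = int(line.split(":", 1)[1].strip(), 16)
            return bool(mask & (1 << CAP_IPC_LOCK_BIT))
    return False  # no CapEff line found (for example, not a Linux status file)


# Check the interpreter that is running this script, if /proc is available.
status_path = Path("/proc/self/status")
if status_path.exists():
    print("CAP_IPC_LOCK effective:", has_cap_ipc_lock(status_path.read_text()))
```

Running this script with the virtual environment's Python before and after the `setcap` command should show whether the capability took effect for that binary.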

Limitations and outlook

Although our optimizations make training itself very efficient, with GPU utilization above 90%, we made some trade-offs in model performance, and there is still plenty of room for optimization inside the model. On the one hand, our optimizations assume that the model's internal structure is left unmodified, so they can hardly address the extra overhead caused by differences between parts of the model. On the other hand, GPU memory utilization during training is still not ideal: of the A100's 40 GB, at most 21 GB is used, leaving nearly half of the memory idle, yet batch_size can only be set to 1, which wastes a lot of capacity.

Starting from these two points, there are three further directions for optimizing AlphaFold:

- Structural simplification: Although AlphaFold 2 achieves good protein prediction accuracy, its complex structure and extremely high resource usage set a high bar for both reproducing the model and deploying inference. It is therefore worth profiling the model internals carefully and looking for ways to simplify the structure to some degree.

- Operator optimization: Phantom Cube AI has accumulated considerable experience in operator optimization, especially for Transformer-type models. AlphaFold is also built on the Transformer structure but does not yet use these techniques, so there is room for further optimization here as well.

- Model parallelism: AlphaFold's GPU memory usage during training is still low, and the per-GPU batch_size can only be set to 1. More model parallelism could be applied to AlphaFold, for example combined with pipeline parallelism, to raise the per-GPU batch_size during training and use memory more efficiently.

We welcome data scientists and developers to research and build together with us.

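- Author: suopu

You may repost this technical blog, and excerpt or quote it without distorting its original meaning, provided you comply with the following terms. Attribution: you must credit the original author, but you may not in any way suggest that Phantom AI endorses you, nor may your use adversely affect Phantom AI's rights. Non-commercial use: you may not use the content of this technical blog for commercial purposes. No derivatives: if you adapt, transform, or build upon this content, you may not publish or distribute the modified content; it is for personal use only.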