Some of our practices in reducing network congestion (I)

For deep learning developers and researchers, high-performance computing power is an important weapon for successful research. Among the factors that affect the speed of deep learning training, the role of network transmission is easy to overlook. Especially for distributed training on large-scale clusters, network congestion can directly leave GPU compute power wasted. It is like a two-way, eight-lane expressway: if the roads are badly planned, the expressway turns into a large parking lot.

In this issue, we share some of Phantom AI's thinking and optimization work on networking.

Let's talk about the network topology first

In Phantom AI's Firefly cluster, large-scale machine learning training tasks place very high demands on communication and data-reading performance, so we build the interconnect between nodes with the kind of high-speed network commonly used in high-performance computing today. How to arrange the cables and switches of this network is the first problem we need to consider: the network topology.

Building a network topology is a bit like building roads: not only must any two nodes be able to reach each other, but packets from every node should also reach their destinations with as little obstruction as possible.

The opposite of unobstructed is congestion. Here is an everyday traffic-jam example to help understand congestion, assuming that all vehicles have the same maximum speed and always drive as fast as they can.

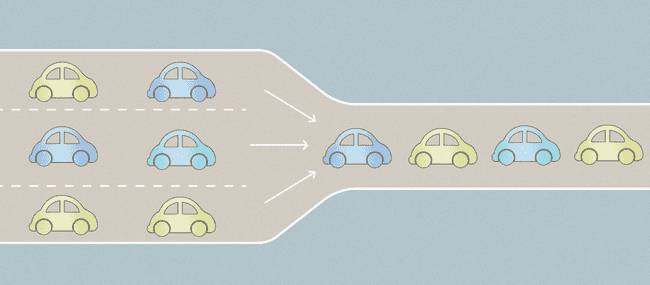

- There are three lanes of traffic at an intersection, with a steady stream of cars coming in but only one lane going out. If the cars in the three lanes take turns entering the exit lane, each lane runs at only 1/3 of its capacity. This is congestion.

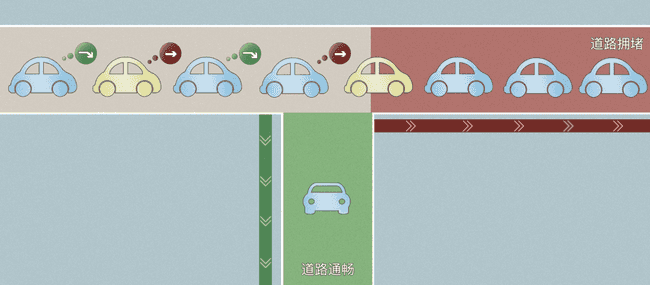

- Suppose there is a T-junction ahead of one of those three lanes, and cars going straight and cars turning right share that lane. Cars going straight head into the congested section, while cars turning right head onto a clear road. But a right-turning car can be blocked by a straight-going car in front of it. If right-turning and straight-going cars happen to alternate, the straight-going cars leave at a rate of 1/3 and each right-turning car has to wait behind one of them, so the lane runs at only 2/3 of its capacity. This is congestion diffusion.
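The two fractions in the road example can be checked with a few lines of arithmetic. The sketch below only illustrates the analogy; the function names and the assumption that one lane carries one car per time unit are ours, not part of any network tool.

```python
from fractions import Fraction


def merge_efficiency(lanes_in: int, lanes_out: int = 1) -> Fraction:
    """Per-lane efficiency when `lanes_in` full lanes take turns
    entering `lanes_out` lanes (each lane carries 1 car per time unit)."""
    return min(Fraction(1), Fraction(lanes_out, lanes_in))


def shared_lane_efficiency(blocked_rate: Fraction) -> Fraction:
    """Efficiency of a lane whose cars strictly alternate between a
    congested direction (limited to `blocked_rate`) and a free direction.
    Every free car waits behind one blocked car, so both directions
    leave the lane at `blocked_rate`."""
    return min(Fraction(1), 2 * blocked_rate)


straight = merge_efficiency(3)              # congestion
shared = shared_lane_efficiency(straight)   # congestion diffusion
print(straight, shared)
```

The right-turning cars never enter the congested road, yet they are slowed to the same rate as the cars that do. That is the sense in which congestion spreads.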

In order to avoid traffic jams as much as possible, it is necessary to have a good road plan. The same goes for the network topology.

Fattree is a network topology commonly used for large AI clusters today, for example in NVIDIA's SuperPOD.

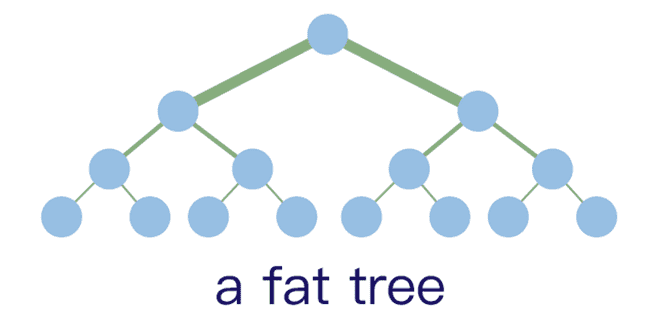

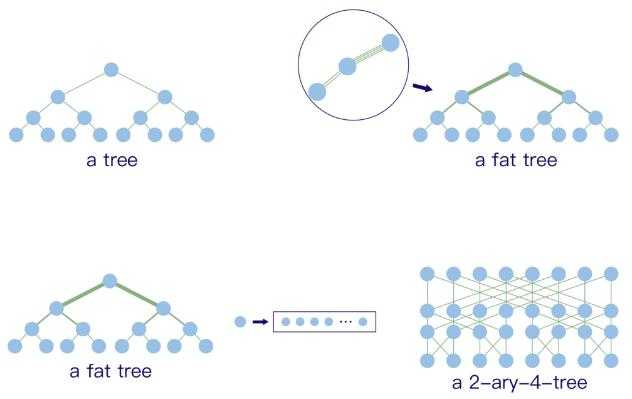

The prototype of Fattree is shown above. Multiple switches are connected in a tree; each layer has a different number of switches, and cable bandwidth scales proportionally from layer to layer so that the total bandwidth of every layer is equal. This is a non-blocking network topology. In terms of the traffic analogy, each switch is an intersection: as long as the intersections in the city center are given enough lanes, traffic jams become unlikely.

The routing rule for this topology is also simple: since there is exactly one shortest path between any two nodes of a tree, traffic from point a to point b just follows that shortest path.
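To make the "only one shortest path" property concrete, here is a minimal sketch that finds the path between two nodes of a tree by walking up to their lowest common ancestor. The node names and the child-to-parent dictionary are hypothetical and exist only for illustration.

```python
from typing import Dict, List, Optional


def tree_path(parent: Dict[str, Optional[str]], a: str, b: str) -> List[str]:
    """Return the unique shortest path from a to b in a tree
    described by a child -> parent map (the root maps to None)."""

    def ancestors(node: str) -> List[str]:
        chain = [node]
        while parent[node] is not None:
            node = parent[node]
            chain.append(node)
        return chain

    up_a = ancestors(a)
    up_b = ancestors(b)
    index_in_a = {node: i for i, node in enumerate(up_a)}
    for j, node in enumerate(up_b):
        if node in index_in_a:
            # `node` is the lowest common ancestor: go up from a, then down to b.
            return up_a[: index_in_a[node] + 1] + up_b[:j][::-1]
    raise ValueError("nodes are not in the same tree")


# Hypothetical tree: one root switch, two lower switches, three end nodes.
parent = {"root": None, "s1": "root", "s2": "root",
          "a": "s1", "c": "s1", "b": "s2"}
print(tree_path(parent, "a", "b"))
print(tree_path(parent, "a", "c"))
```

Because the path is unique, there is nothing for a routing algorithm to choose in the prototype.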

However, such a topology is clearly impractical because it demands too much of the cables and switches. Since no single cable has that much bandwidth, we bundle multiple cables and use them as one large cable; since no single switch has that much bandwidth, we split the large switch into multiple smaller ones. This is how the prototype evolved into the Fattree that is used in practice.

Compared to the prototype, this practical Fattree introduces a new problem: when a packet has to travel from one switch to another, there are often many possible paths, so which one should it take? If a large number of packets need to cross the same link at the same time (link multiplexing), congestion still occurs. In Phantom AI's experience, link multiplexing can cut the overall traffic of the cluster to half of its normal level, or even less. Reducing how often congestion occurs and how far it spreads, by planning traffic paths sensibly, is the problem that routing algorithms need to solve.
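A toy calculation shows why link multiplexing hurts. The sketch below assumes that every link has capacity 1 and is shared equally by the flows crossing it, which is a deliberate simplification; the link names and the two-spine layout are hypothetical and do not describe the Firefly cluster.

```python
from collections import Counter
from typing import List, Sequence


def per_flow_throughput(paths: Sequence[Sequence[str]]) -> List[float]:
    """Each flow is a list of link names. Every link has capacity 1 and is
    shared equally, so a flow is limited by its most crowded link."""
    load = Counter(link for path in paths for link in set(path))
    return [1.0 / max(load[link] for link in path) for path in paths]


# Two flows from leafA to leafB, with two spine switches available.
spread = [["leafA->spine0", "spine0->leafB"],
          ["leafA->spine1", "spine1->leafB"]]
shared = [["leafA->spine0", "spine0->leafB"],
          ["leafA->spine0", "spine0->leafB"]]

print(sum(per_flow_throughput(spread)))  # each flow has its own links
print(sum(per_flow_throughput(shared)))  # both flows squeeze through spine0
```

In this toy case, sending both flows through the same spine halves the total throughput even though the second spine sits idle.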

MLNXSM's routing algorithm

Today, high-speed networks are mostly managed by MLNXSM, provided by Mellanox. MLNXSM offers a routing algorithm for the Fattree topology. It is not described in detail in the documentation and its code is not open source, but MLNXSM is widely known to be a heavily modified version of OpenSM, so interested readers can read the OpenSM source code to understand the details.

Core ideas

Switches in a high-speed network route as follows: each switch holds a port forwarding table, and every packet is forwarded to the port that the table lists for the packet's destination. The job of the routing algorithm is to generate this port forwarding table for each switch.

OpenSM's Fattree algorithm assigns one downlink to each leaf node (that is, each NIC) at every layer of the Fattree, and all traffic that reaches that layer on its way to that target NIC goes down through that downlink. These downlinks are load balanced so that each one is assigned as nearly equal a number of target NICs as possible. This reduces link multiplexing for traffic flowing from upper layers to lower layers. Ideally, if the bandwidth between Fattree layers is equal, i.e., the cross-sectional bandwidth ratio is 1:1, top-to-bottom link multiplexing can be avoided altogether. Congestion then occurs only in bottom-to-top traffic, and it spreads less.
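As an illustration of what a port forwarding table is, the sketch below models a tiny network with two leaf switches and one spine switch. All switch names, NIC names and port numbers are made up for this example; a real table is generated by the subnet manager and is much larger.

```python
from typing import Dict, List, Tuple

# Hypothetical forwarding tables: switch -> {destination NIC -> output port}.
forwarding_tables: Dict[str, Dict[str, int]] = {
    "leaf0": {"nicA": 1, "nicB": 2, "nicC": 3, "nicD": 3},
    "leaf1": {"nicA": 3, "nicB": 3, "nicC": 1, "nicD": 2},
    "spine0": {"nicA": 1, "nicB": 1, "nicC": 2, "nicD": 2},
}

# Hypothetical cabling: (switch, port) -> device plugged into that port.
links: Dict[Tuple[str, int], str] = {
    ("leaf0", 1): "nicA", ("leaf0", 2): "nicB", ("leaf0", 3): "spine0",
    ("leaf1", 1): "nicC", ("leaf1", 2): "nicD", ("leaf1", 3): "spine0",
    ("spine0", 1): "leaf0", ("spine0", 2): "leaf1",
}


def trace(first_switch: str, dest: str) -> List[str]:
    """Follow the forwarding tables hop by hop until the packet
    leaves the last switch and reaches a NIC."""
    hops = [first_switch]
    node = first_switch
    while node in forwarding_tables:
        port = forwarding_tables[node][dest]
        node = links[(node, port)]
        hops.append(node)
    return hops


print(trace("leaf0", "nicD"))
```

Each switch only looks at the destination, never at the source, so the path of a packet is fully determined by the tables the routing algorithm writes.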
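The downlink assignment idea can be sketched for a two-layer Fattree in which every spine switch has one downlink to every leaf switch. This is a simplified illustration of the idea described above, not OpenSM's actual implementation; the round-robin rule and all names are assumptions.

```python
from collections import Counter
from typing import Dict, List, Tuple


def assign_downlinks(
    nics_per_leaf: Dict[str, List[str]], spines: List[str]
) -> Dict[str, Tuple[str, str]]:
    """Map each destination NIC to the single (spine, leaf) downlink
    that carries all spine-to-leaf traffic addressed to it."""
    assignment = {}
    for leaf, nics in nics_per_leaf.items():
        for i, nic in enumerate(nics):
            # Round-robin over the spines keeps the per-downlink count balanced.
            assignment[nic] = (spines[i % len(spines)], leaf)
    return assignment


# Hypothetical 1:1 Fattree: 2 NICs per leaf and 2 spines.
nics_per_leaf = {"leaf0": ["n0", "n1"], "leaf1": ["n2", "n3"]}
assignment = assign_downlinks(nics_per_leaf, ["s0", "s1"])
nics_per_downlink = Counter(assignment.values())
print(assignment)
print(max(nics_per_downlink.values()))
```

With a 1:1 ratio every downlink serves exactly one destination NIC, so top-to-bottom traffic for different destinations never shares a link. With more NICs per leaf than spines, some downlinks necessarily serve several NICs.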

This sounds great, but we still encountered some problems during the practical application of MLNXSM's Fattree algorithm.

Problems encountered in actual use

- The actual network topology of Firefly II is not a standard Fattree. In addition to the compute nodes and storage nodes, we also have test machines, development machines, machines used to manage the cluster, and so on. They have much lower bandwidth requirements, so we connect them to the core network with lower-bandwidth NICs or by other means. MLNXSM's Fattree algorithm does not handle this well, and it does not take the type of workload a machine serves into account when computing routes. As a result, because the total bandwidth of our machines' NICs is greater than the cross-sectional bandwidth of the Fattree, the routing table computed by MLNXSM often assigns two or more NICs to the same link, and if two of those NICs happen to be heavily loaded, congestion results.

- The routing table produced by MLNXSM's Fattree algorithm is severely imbalanced: the switches that come first in the numbering carry more of the load. We presume this is caused by the order in which the switches are enumerated.

- Mellanox switches have an advanced feature called SHARP that lets part of the computation run inside the switch. We do not currently enable SHARP in practice, but the routing algorithm still accounts for load on the SHARP nodes.

- If several Fattrees need to be connected to each other, the behavior of the routing algorithm becomes difficult to control.
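The first problem can be reproduced in miniature. The sketch below assigns NICs to downlinks in plain enumeration order, ignoring machine type, and then reports links that ended up with two or more heavily loaded NICs. The machine names, the enumeration order and the round-robin rule are hypothetical; they are not MLNXSM's real behavior, which is not documented.

```python
import math
from collections import defaultdict
from typing import Dict, List, Set


def heavy_collisions(assignment: Dict[str, int], heavy: Set[str]) -> Dict[int, List[str]]:
    """Return the links that carry two or more heavily loaded NICs."""
    by_link = defaultdict(list)
    for nic, link in assignment.items():
        if nic in heavy:
            by_link[link].append(nic)
    return {link: nics for link, nics in by_link.items() if len(nics) >= 2}


# Six NICs but only four downlinks: the links are oversubscribed.
enumeration_order = ["gpu0", "dev0", "mgmt0", "test0", "gpu1", "gpu2"]
num_links = 4
type_blind = {nic: i % num_links for i, nic in enumerate(enumeration_order)}

print(math.ceil(len(enumeration_order) / num_links))  # some link must carry this many NICs
print(heavy_collisions(type_blind, heavy={"gpu0", "gpu1", "gpu2"}))
```

Here two compute NICs land on link 0 while links 2 and 3 carry only lightly loaded machines, even though the NIC counts per link look balanced.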

Some of our practices

To address the issues mentioned above, Phantom AI has developed its own routing algorithm adapted to the characteristics of Firefly II.

The main ideas are as follows:

- Logically split the overall network into a standard Fattree part and the remaining parts.

- For the part of the network topology that is a standard Fattree, assign an independent top-to-bottom link to each port on every leaf switch, using an idea similar to the OpenSM Fattree algorithm described above. This makes top-to-bottom traffic completely free of link multiplexing.

- For the other parts, compute forwarding paths with graph algorithms such as shortest paths, while also taking link load balancing into account.

- For the part of the interconnection between Fattree and other networks, it is also necessary to do load balancing between the interconnecting lines so that each interconnecting line is assigned an equal number of destination nodes.

- While load balancing, spread the traffic of each individual application across links based on the type of the target machine, so as to maximize the peak bandwidth available to a single application.

- For NICs with different bandwidths, weights in equal proportion to the bandwidth are used for load balancing.

- For SHARP nodes, only guarantee connectivity and ignore their load.

- Links such as interconnects and lower-bandwidth NICs are more likely to be sources of congestion. Concentrating the traffic that passes through them onto a subset of the switches minimizes the impact of congestion if it does occur.

- When a network failure occurs, the load on the failed switch needs to be transferred to another switch.
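Two of the ideas above, spreading machines of the same type across links and weighting by NIC bandwidth, can be sketched with a simple greedy rule. This is only an illustration under our own assumptions (a single group of links, made-up machine names and bandwidths, a greedy heuristic); it is not the algorithm that runs on Firefly II.

```python
from typing import Dict, List, Tuple


def balance(nics: List[Tuple[str, str, float]], links: List[int]) -> Dict[str, int]:
    """nics: (name, machine_type, bandwidth). Returns name -> link.
    Each NIC goes to the link with the least bandwidth already assigned
    for its own machine type, with ties broken by total assigned bandwidth."""
    total = {link: 0.0 for link in links}
    per_type: Dict[str, Dict[int, float]] = {}
    assignment = {}
    # Place high-bandwidth NICs first, while the links are still empty.
    for name, mtype, bandwidth in sorted(nics, key=lambda n: -n[2]):
        type_load = per_type.setdefault(mtype, {link: 0.0 for link in links})
        link = min(links, key=lambda l: (type_load[l], total[l]))
        assignment[name] = link
        type_load[link] += bandwidth
        total[link] += bandwidth
    return assignment


# Hypothetical machines: three compute NICs and three low-bandwidth NICs.
nics = [("gpu0", "compute", 200.0), ("dev0", "dev", 25.0),
        ("mgmt0", "mgmt", 25.0), ("test0", "test", 25.0),
        ("gpu1", "compute", 200.0), ("gpu2", "compute", 200.0)]
result = balance(nics, links=[0, 1, 2, 3])
print(result)
```

In this toy run the three compute NICs end up on three different links, and the low-bandwidth machines are gathered on the remaining link, which loosely mirrors the idea of concentrating likely congestion sources. When a link or switch fails, one option under the same assumptions is to rerun the function with the failed link removed from `links`.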

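After a long period of validation in production on Firefly II, this routing algorithm developed by Phantom AI has solved the problem of the original MLNXSM routing algorithm being ill-suited to our cluster, and has significantly improved the efficiency of the cluster's network usage.

Phantom AI continues to explore better and more flexible routing schemes. Colleagues and researchers are welcome to join the discussion and build with us.

- Author: suopu

You may repost this technical blog, and excerpt or quote its content without distorting the original meaning, provided that you comply with the following terms. Attribution: you must credit the original author, but you may not in any way imply that Phantom AI (幻方) endorses you, and you may not adversely affect Phantom AI's rights. Non-commercial use: you may not use the content of this technical blog for commercial purposes. No derivatives: if you adapt, transform, or build upon this content, you may not publish or distribute the modified content; it is for personal use only.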